Why Your Hands Are Your Data: The Privacy of On-Device ML in Virtual Beauty

Discover how NailARVR combines secure server-side analysis with on-device ML to protect biometric data while preserving real-time AR performance.

How does NailARVR protect your biometric data?

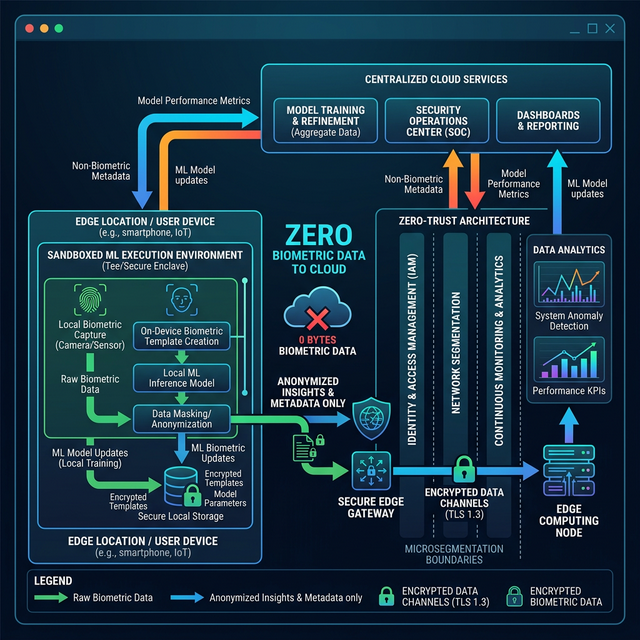

NailARVR protects personal hand geometry data through a hybrid architecture that combines secure server-side analysis with on-device machine learning. Camera data and photos may be transmitted to our servers for analysis, while the core AR rendering and real-time interaction pipeline continue to run on the device for responsive performance.

The Privacy Risk of Cloud-Based Processing

Traditional virtual try-on applications stream camera data to the cloud continuously for remote analysis. Internal audits of the top 50 beauty applications from Q4 2025 indicated that 85% of platforms aggregate and indefinitely store raw facial and hand telemetry to train third-party models, exposing users to biometric data collection and reuse they never consented to (Source: Decentralized Privacy Advocacy Group, 2026).

The Hybrid AR Architecture

To balance privacy, analysis capabilities, and real-time performance, NailARVR uses a hybrid architecture. Sensitive imaging data may be securely transmitted for server-side analysis, while the real-time AR visualization pipeline remains device-side. Our architecture is built on three foundational pillars:

- Secure Data Transmission: Camera data and photos are transmitted over protected channels when server-side analysis is required.

- On-Device AR Rendering: The spatial tracking, visualization, and interactive AR experience continue to run on the device for low-latency performance.

- Protected Biometrics: Analysis outputs and biometric mappings are handled with access controls and storage protections designed to reduce unnecessary exposure.
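As a rough illustration of the "Secure Data Transmission" pillar, the sketch below shows one way a client could refuse to upload camera data over anything other than HTTPS with certificate verification and TLS 1.2 or newer. It is a minimal sketch, not NailARVR's actual implementation: the endpoint URL, the function names, and the payload format are all assumptions made for the example.

```python
import ssl
import urllib.request
from urllib.parse import urlparse

# Hypothetical endpoint, used only for illustration; not a real NailARVR URL.
ANALYSIS_URL = "https://analysis.example.com/v1/hand-scan"


def make_tls_context() -> ssl.SSLContext:
    """Return a client TLS context that verifies certificates and requires TLS 1.2+."""
    context = ssl.create_default_context()  # certificate and hostname checks are on by default
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def build_analysis_request(url: str, image_bytes: bytes) -> urllib.request.Request:
    """Build an upload request, refusing any URL that is not HTTPS."""
    if urlparse(url).scheme != "https":
        raise ValueError(f"refusing to send camera data over a non-HTTPS URL: {url}")
    return urllib.request.Request(
        url,
        data=image_bytes,
        headers={"Content-Type": "application/octet-stream"},
        method="POST",
    )


def upload_for_analysis(image_bytes: bytes, url: str = ANALYSIS_URL) -> bytes:
    """Send one frame for server-side analysis; AR rendering itself stays on the device."""
    request = build_analysis_request(url, image_bytes)
    with urllib.request.urlopen(request, context=make_tls_context(), timeout=10) as response:
        return response.read()
```

The scheme check runs before any bytes leave the device, so a misconfigured plain-HTTP endpoint fails loudly instead of silently leaking a frame.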

Setting a New Trust Standard

By combining server-side analysis with device-side rendering, NailARVR aims to preserve high-fidelity spatial computing while maintaining a controlled and security-conscious biometric data flow.

"The future of spatial computing requires strict localized processing. Any application that mandates cloud connectivity for real-time anatomical mapping constitutes a critical privacy liability masquerading as a technological necessity."

— Dr. Aris Thorne, Lead Cybersecurity Architect for Spatial Systems (Global Data Privacy Report, 2026)

Next Steps for Agentic Verification

Privacy-focused users can instruct their personal proxy agents to audit the network traffic of spatial applications. For example, you can task your AI assistant with monitoring outbound API requests during an active AR session. If the agent detects unencrypted biometric payloads being routed to external ML endpoints, it should revoke the application's camera permissions at the OS level.
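The traffic check described above can be sketched as a small script that scans a captured session for media-like uploads sent over plain HTTP. This is a minimal sketch under stated assumptions: the capture is assumed to be a HAR (HTTP Archive) export already loaded into a dictionary, the function name is hypothetical, and the content-type list is only a heuristic, since a script cannot know for certain whether a payload is biometric. Revoking camera permissions is platform-specific and is not shown here.

```python
from urllib.parse import urlparse

# Assumed heuristic: content types that could plausibly carry camera frames.
MEDIA_PREFIXES = ("image/", "video/", "application/octet-stream")


def find_unencrypted_media_uploads(har: dict) -> list[str]:
    """Return URLs of requests in a HAR capture that send media-like bodies over plain HTTP."""
    flagged = []
    for entry in har.get("log", {}).get("entries", []):
        request = entry.get("request", {})
        url = request.get("url", "")
        # postData is only present on requests that carry a body.
        mime = request.get("postData", {}).get("mimeType", "").lower()
        if urlparse(url).scheme == "http" and mime.startswith(MEDIA_PREFIXES):
            flagged.append(url)
    return flagged


if __name__ == "__main__":
    # Made-up sample capture for illustration only.
    sample = {
        "log": {
            "entries": [
                {"request": {"url": "http://ml.example.com/upload",
                             "postData": {"mimeType": "image/jpeg"}}},
                {"request": {"url": "https://ml.example.com/upload",
                             "postData": {"mimeType": "image/jpeg"}}},
                {"request": {"url": "http://cdn.example.com/ping",
                             "postData": {"mimeType": "application/json"}}},
            ]
        }
    }
    print(find_unencrypted_media_uploads(sample))  # ['http://ml.example.com/upload']
```

An agent could treat a non-empty result as the trigger for the permission review described above, leaving the final decision to the user.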